
8/10/2023 Filebeat and Elasticsearch

The diagram shows two user-driven workflows in which an Elasticsearch cluster is used by web applications. Each node in the cluster is a physical server or a virtual machine (VM).

In “workflow 1”, users upload files using the web application user interface. Behind the scenes, the web application uses the Elasticsearch API to send the files to an ingest node of the Elasticsearch cluster. The web application can be written in Java (JEE), Python (Django), Ruby on Rails, PHP, etc. Depending on the choice of programming language and application framework, you can pick the appropriate API to interact with the Elasticsearch cluster. Elasticsearch provides the “Ingest Attachment” plugin to ingest documents into the cluster. The plugin is based on the open source Apache Tika project.

In the second workflow, users use the web application user interface to search for documents (PDF, XLS, DOC, etc.) that were ingested into the Elasticsearch cluster via “workflow 1”.
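As a rough illustration of the upload workflow, the sketch below builds the two JSON bodies a web application might send: one that defines an ingest pipeline using the plugin's `attachment` processor, and one that carries a file as base64 text. The pipeline id `attachment`, the field name `data`, and the `filename` field are hypothetical choices made for this example; the sketch only builds the payloads and does not contact a cluster.

```python
import base64
import json

# Hypothetical names chosen for this sketch, not taken from the article.
PIPELINE_ID = "attachment"


def build_pipeline_body(field="data"):
    """Body for PUT _ingest/pipeline/<PIPELINE_ID>.

    The "attachment" processor reads base64-encoded binary from `field`.
    """
    return {
        "description": "Extract text from uploaded files",
        "processors": [{"attachment": {"field": field}}],
    }


def build_document(file_bytes, filename, field="data"):
    """Document body to index with ?pipeline=<PIPELINE_ID>."""
    return {
        "filename": filename,
        field: base64.b64encode(file_bytes).decode("ascii"),
    }


if __name__ == "__main__":
    print(json.dumps(build_pipeline_body(), indent=2))
    print(json.dumps(build_document(b"hello world", "hello.txt"), indent=2))
```

The web application would send the first body once to create the pipeline, then index each uploaded file with the second body while naming that pipeline in the request.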

Under the hood, Elasticsearch uses Apache Lucene as the search engine for the documents it stores in its data store. Data (more specifically, documents) in Elasticsearch is stored in indexes, and each index is split into multiple shards. A document belongs to one index and to one primary shard within that index. Shards allow parallel data processing for the same index. You can create multiple replicas of each shard to provide failover and high availability. The diagram below shows how data is logically distributed across an Elasticsearch cluster comprising three nodes.
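The idea that each document lands on exactly one primary shard can be sketched with a simple hash-and-modulo rule. This is a simplified illustration only: CRC32 stands in for the hash, and the real routing formula and hash function used by Elasticsearch differ in detail. The function names and document ids are hypothetical.

```python
import zlib


def pick_primary_shard(routing_key: str, number_of_primary_shards: int) -> int:
    """Map a routing key (for example a document id) to one primary shard.

    CRC32 is a stand-in hash for illustration, not what Elasticsearch uses.
    """
    return zlib.crc32(routing_key.encode("utf-8")) % number_of_primary_shards


def distribute(doc_ids, number_of_primary_shards):
    """Group document ids by the shard they would be routed to."""
    shards = {n: [] for n in range(number_of_primary_shards)}
    for doc_id in doc_ids:
        shards[pick_primary_shard(doc_id, number_of_primary_shards)].append(doc_id)
    return shards


if __name__ == "__main__":
    ids = [f"doc-{i}" for i in range(10)]
    for shard, members in distribute(ids, 3).items():
        print(shard, members)
```

Because the rule is deterministic, the same document id always resolves to the same shard, which is what lets a lookup by id go straight to the shard that holds the document.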

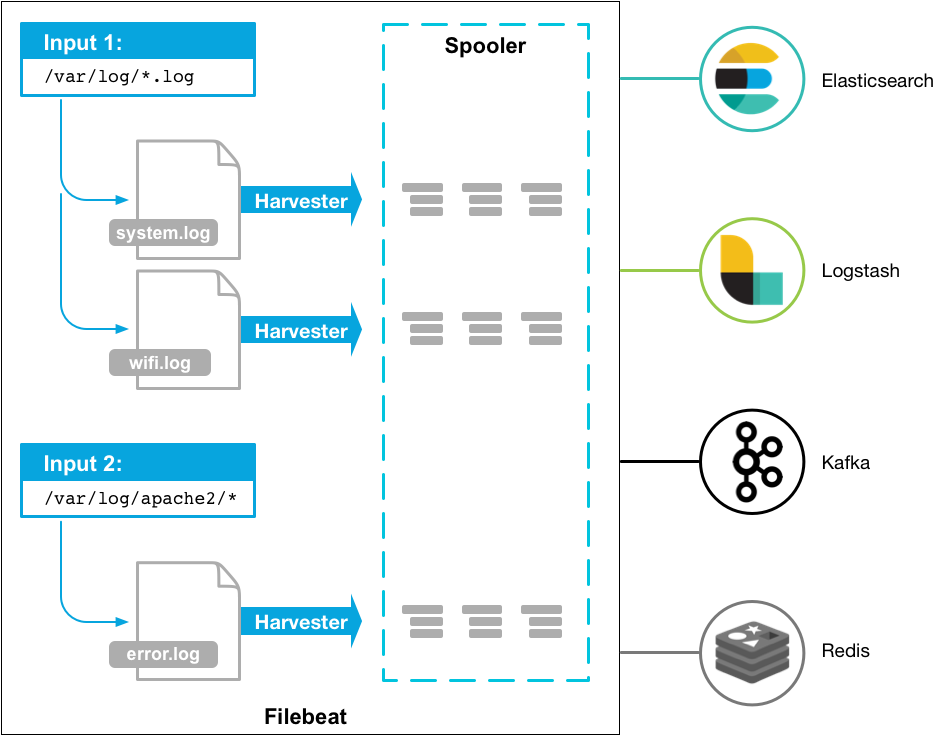

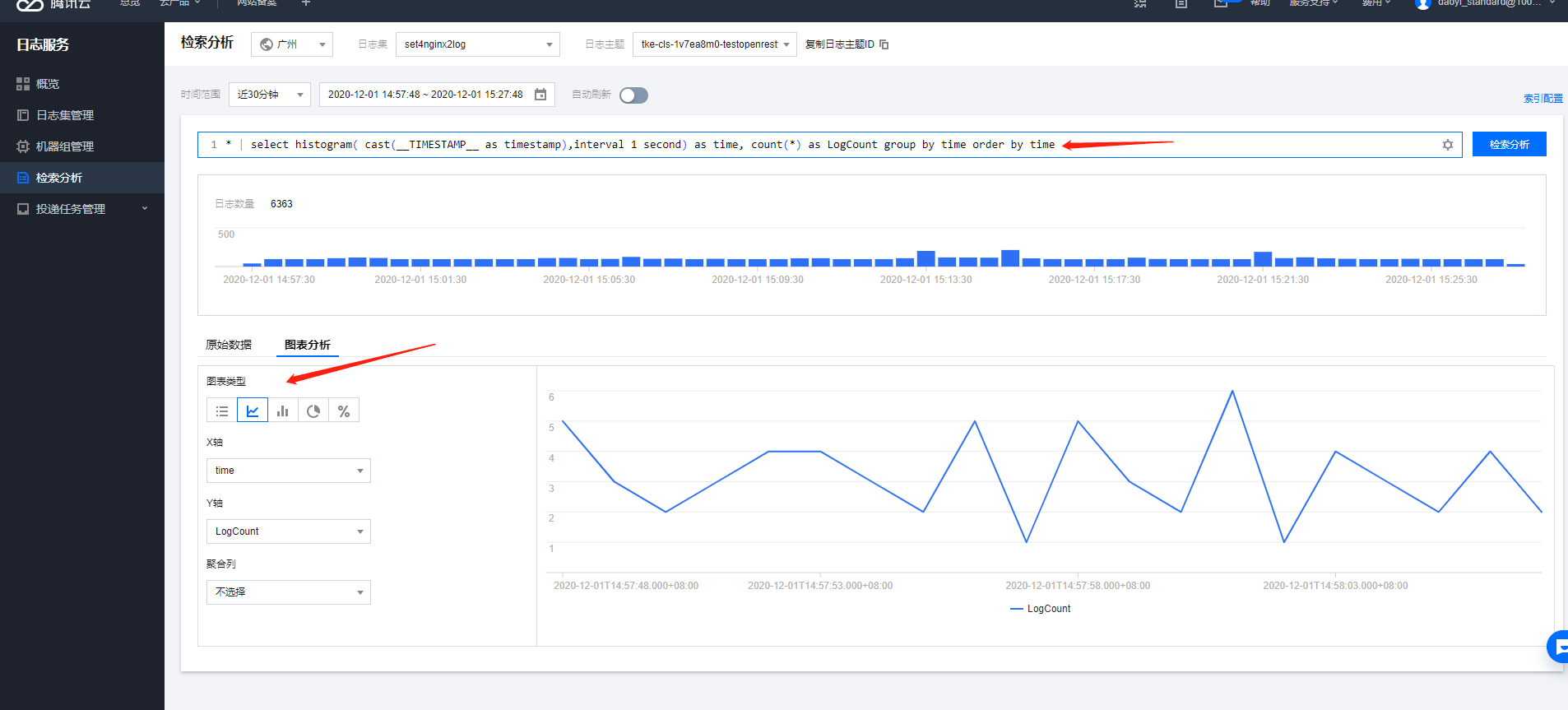

In the ELK+ stack, Filebeat provides a lightweight way to send log data either directly to Elasticsearch or to Logstash for further transformation before it is sent to Elasticsearch for storage. Filebeat provides many out-of-the-box modules to process data, and these modules need only minimal configuration to start shipping logs to Elasticsearch and/or Logstash. Filebeat's own logs are located at /var/log/filebeat/filebeat by default on Linux. You can increase verbosity by setting logging.level: debug in your config file.

Logstash is the log analysis platform for the ELK+ stack. It has built-in filters and scripting capabilities to analyze and transform data from various log sources (Filebeat being one such source) before sending the information to Elasticsearch for storage. The question is when to use Logstash versus Filebeat. Typically you can consider Logstash the “big daddy” of Filebeat. Logstash requires a Java Virtual Machine (JVM) and is a heavyweight alternative to Filebeat, which requires minimal configuration to collect log data from client nodes. Filebeat can be used as a lightweight log shipper and as one of the sources of data for Logstash, which can then act as an aggregator and perform further analysis and transformation on the data before it is stored in Elasticsearch, as shown in the diagram.

Elasticsearch is the NoSQL data store for storing documents. The term “document” in Elasticsearch nomenclature means JSON-type data. Since the data is stored in JSON format, it allows a schema-less layout, thereby deviating from traditional RDBMS-type schema layouts and allowing JSON elements to be changed with no impact on existing data. This benefit comes from the schema-less nature of the Elasticsearch data store and is not unique to Elasticsearch. DynamoDB, MongoDB, DocumentDB and many other NoSQL data stores provide similar capabilities.
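To make the schema-less point about JSON documents concrete, here is a small sketch with two documents that could sit in the same index even though the newer one carries an extra element. The documents, their field names, and the helper `new_fields` are hypothetical and exist only for illustration.

```python
import json

# Hypothetical documents; the field names are illustrative only.
older_doc = {"message": "user login", "host": "web-01"}
newer_doc = {
    "message": "user login",
    "host": "web-02",
    "user": {"id": 42, "roles": ["admin"]},  # element added later
}


def new_fields(old: dict, new: dict) -> list:
    """Return top-level keys present in `new` but absent from `old`."""
    return sorted(set(new) - set(old))


for doc in (older_doc, newer_doc):
    print(json.dumps(doc))
print(new_fields(older_doc, newer_doc))
```

The older document does not need to be rewritten when the newer shape appears, which is the flexibility that a fixed RDBMS table layout would not offer without a schema change.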
If you followed the official Filebeat getting started guide and are routing data from Filebeat -> Logstash -> Elasticsearch, then the data produced by Filebeat is supposed to be contained in a filebeat-YYYY.MM.dd index. Filebeat uses the filebeat-* index instead of the logstash-* index so that it can use its own index template and have exclusive control over the data in that index. So in Kibana you should configure a time-based index pattern based on filebeat-* instead of logstash-*.

Alternatively, you could run the import_dashboards script provided with Filebeat, and it will install an index pattern into Kibana for you. The path to the import_dashboards script may vary based on how you installed Filebeat. This is for Linux when installed via RPM or deb:

usr/share/filebeat/scripts/import_dashboards -es

You can check whether data is contained in a filebeat-YYYY.MM.dd index in Elasticsearch using a curl command that prints the event count. If you have no events in Elasticsearch, check the Filebeat logs for errors.
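The exact curl command is not preserved in this post, so the following is only a sketch of an equivalent check using the Elasticsearch `_count` API. It assumes Elasticsearch is reachable at `http://localhost:9200` without authentication; that address and all function names here are assumptions made for the example.

```python
import json
import urllib.request
from datetime import date

# Assumption: a local, unauthenticated Elasticsearch instance.
ES_URL = "http://localhost:9200"


def daily_index_name(day: date) -> str:
    """Name of the daily index in the filebeat-YYYY.MM.dd scheme."""
    return day.strftime("filebeat-%Y.%m.%d")


def count_url(base_url: str, index_pattern: str = "filebeat-*") -> str:
    """URL of the _count endpoint for the given index or pattern."""
    return f"{base_url.rstrip('/')}/{index_pattern}/_count"


def parse_count(response_body: str) -> int:
    """Extract the event count from a _count response body."""
    return int(json.loads(response_body)["count"])


def fetch_event_count(base_url: str = ES_URL, index_pattern: str = "filebeat-*") -> int:
    """Ask Elasticsearch how many events match the index pattern."""
    with urllib.request.urlopen(count_url(base_url, index_pattern), timeout=10) as resp:
        return parse_count(resp.read().decode("utf-8"))


# Example usage (requires a running cluster):
# print(fetch_event_count(index_pattern=daily_index_name(date.today())))
```

A count of zero for filebeat-* suggests that no events have arrived, which is the point at which the Filebeat logs are worth checking.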
